Looking Forward

Networking has been a long haul. We have come a long way, from hubs, to switching, and now to networks being virtualized in hardware, in software, and sometimes even on the occasional Raspberry Pi.

There are so many terms out there, and nobody agrees on what the definition of “SD” anything is. If we go by Wikipedia, it says:

“Software-defined networking (SDN) is an approach to computer networking that allows network administrators to programmatically initialize, control, change, and manage network behavior dynamically via open interfaces[1] and abstraction of lower-level functionality.”

That is a little general, isn’t it? How does that concept help a business actually deliver value? How do I get from “SDN” to business value without spending millions of dollars and hiring people to write “stuff” internally?

Everyone tried to create something and, as things normally go, everyone said “let’s use this open protocol”, not realizing that the open protocol did about 60% of what we needed in the real world, had no interface because it is a protocol, and needed a gaggle of PhDs to deploy.

If you are a developer you are probably reading this thinking “It is not that hard”, but for some of us, especially traditional network types or managers, it really is that hard. And what about the <1000 user crowd?

VMware does for the network what it did for servers

This is that kind of thing: VMware is changing the game, again.

I have to admit, I was not a believer. I was truly the person that sat here and thought “If I want to virtualize my network I want to do it in silicon”. CPU power has reached a point where that argument does not hold water anymore, and since we can engineer our way around it anyway, it is a moot point.

Virtualizing Network Hardware Is Different

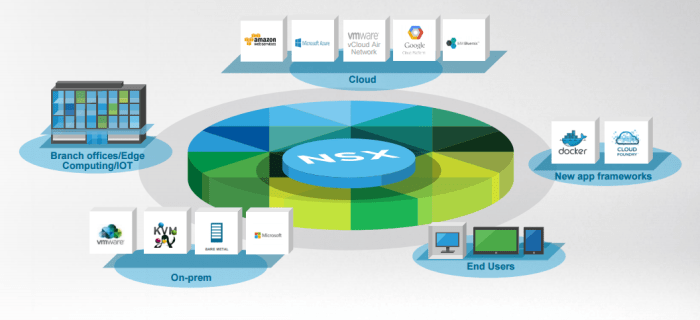

Here is the problem with something like a pure Cisco ACI deployment, or any virtualization done in the hardware. The entire point of network virtualization is that the network shouldn’t matter. If I want to create a truly elastic infrastructure, then my environment must not care what the transport is underneath.

I am not suggesting the wild west. On the contrary, you still need to monitor, manage and engineer the underlying network to attain the performance you want. But if my intention is to create a hybrid strategy into cloud services like Azure, AWS, TATA or Long View ODI, I shouldn’t much care. I want to put the workload where I want, when I want, with the security definitions I need, and I don’t want to use 27 different tools to achieve that.

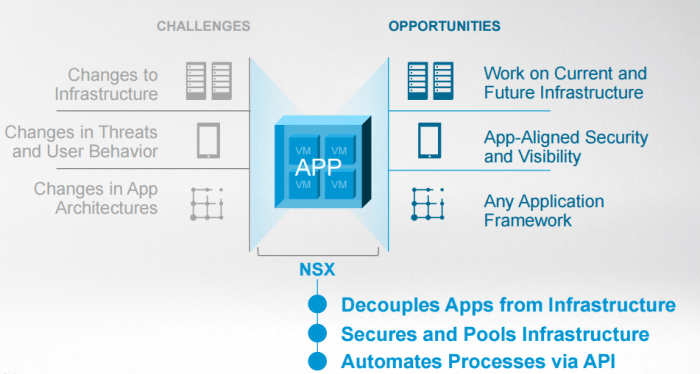

Applications Are The Focus

Everyone is talking this way. Cisco is talking ACI (Application Centric Infrastructure) and VMware is talking NSX, but the concepts are the same. You need the security of your apps and data, but you also need to deal with changes in threats and user behavior. You need analytics and security.

The app itself needs to be decoupled from the underlying infrastructure to make things elastic, but to attain true elasticity you need an automation platform that does a few things:

- Delivers on IT and business process

- Automates to remove mistakes

- Does not require significant programming knowledge

Ideally you need to have all of this in a single pane of glass to make it easy to manage; otherwise, cross-management integrations are going to cause you a ton of headaches. When people say “service chaining” I start to get a migraine. Not to say you cannot do that, you can, and they integrate with a huge ecosystem of partners, but I should not have to pick a management platform and then find that everything else is a partner product.

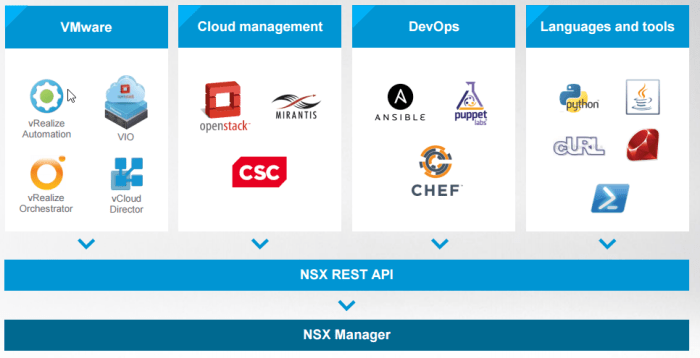

You can go wild if you want

I keep complaining about going “fully open protocol”, but the good news is that you can go full open protocols, full automation and full custom with NSX if that is what you want. They have the automation tools to get you there. So if you are the developer type, and I am not, feel free to go and get your python on and chef yourself some puppet stacks. I will be over here wishing I honestly understood all that stuff.

Give me the veggies

Here is what you need to know. We will break it down into a few bite-sized chunks.

Architecture

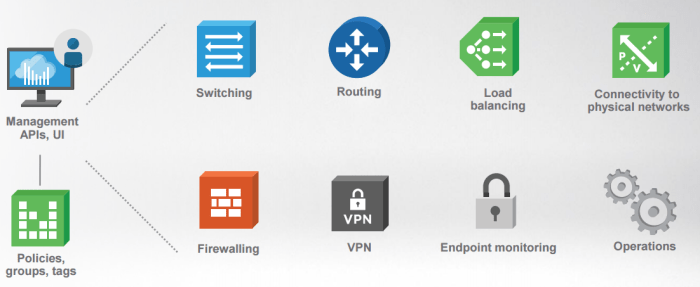

vCenter is still here

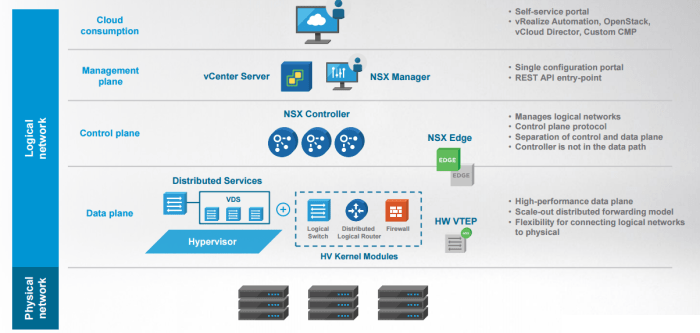

So, the big things you need to know. vCenter is still very much a part of how you live, and NSX Manager plugs into vCenter to give you all the management you know and love. The good news is, they are not reinventing the world here, so if you are already a VCP or a VMware-savvy person you should feel right at home.

NSX Controller

The NSX Controller manages the world of NSX, but is configured by the NSX Manager. All of your logical networks and control are handled here. This isn’t in the data path; it basically orchestrates the config download to all of the components: the distributed logical router (a fancy name for a virtual router) and the switching endpoints. You don’t really deal with this day to day.

Data Plane

This is your hypervisor, and you don’t really change anything here. Your connectivity is in place, and the hypervisor knows from the controller which domain each VM is in, whether it needs to be transported between sites, and which VMs it can talk to. This is where your logical switch, distributed logical router and firewall processes actually live.

Multi-Site Capabilities

This is where I think NSX really shines, not just in the ability to segment, but to take that segmentation and make it elastic across locations. Pick up and move a VM across data centers, and IP addresses do not change, and security constructs remain intact. Doing maintenance in a DC and need a full shut down? No problem, move your workloads and shut it down. Distribute your apps using the built-in load balancer across the network.

The key here is that this works brownfield, no need to lift and shift all of your apps into a new network design to make it work, and no application has to change IP addresses to get this DR functionality. Extend across geographical boundaries, keep your security posture in check.

When you move workloads around, you do not lose your security policies, and you do not pay for NSX DR licenses for active/standby, only for active/active.

The multi-site capabilities alone are a reason to deploy NSX, and many customers do. Even if they are not micro-segmenting their network today, the mobility options are worth the price of admission.

Micro-Segmentation

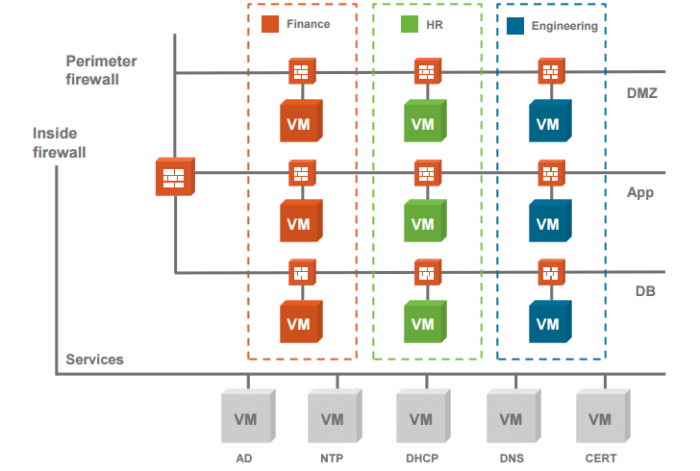

What an industry word, but the bottom line is, we need to segment services from services at the service level – not at the subnet level.

This is a stateful firewall configured on the 5-tuple, with full chaining out to IPS/IDS possible.

This is not just an ACL; it is a full ALG (application-level gateway), so it takes data and control / ephemeral ports and groups them, and you do not end up with a giant mess in your rules.

A bit of an eye chart, but the idea is that each VM can now be its own perimeter, and policies are created once and then grouped, so mistakes against policies are reduced. Threats have a hard time spreading when things are locked down like this.
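To make the 5-tuple and grouping idea concrete, here is a minimal Python sketch of group-based rule matching with a default deny. It is not NSX code and does not use any NSX API: the group names, IP addresses, rule list and the `evaluate` function are all made up for illustration, the source port is treated as "any", and connection state tracking is left out entirely.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Flow:
    """One connection attempt, described by its 5-tuple."""
    src_ip: str
    dst_ip: str
    protocol: str   # "tcp" or "udp"
    src_port: int   # usually ephemeral, so rules below ignore it
    dst_port: int


@dataclass(frozen=True)
class Rule:
    """A policy written once against groups, not individual VMs."""
    name: str
    src_group: str
    dst_group: str
    protocol: str
    dst_port: Optional[int]  # None means any destination port
    action: str              # "allow" or "deny"


# Hypothetical security groups: group name -> member VM addresses.
GROUPS = {
    "web": {"10.0.1.10", "10.0.1.11"},
    "db": {"10.0.2.20"},
}

# Hypothetical rule set: web servers may reach the database on 3306.
RULES = [
    Rule("web-to-db", "web", "db", "tcp", 3306, "allow"),
]


def evaluate(flow, rules=RULES, groups=GROUPS):
    """Return the action of the first matching rule, else default deny."""
    for rule in rules:
        if (
            flow.src_ip in groups[rule.src_group]
            and flow.dst_ip in groups[rule.dst_group]
            and flow.protocol == rule.protocol
            and (rule.dst_port is None or flow.dst_port == rule.dst_port)
        ):
            return rule.action
    return "deny"
```

With these made-up groups, a web VM reaching the database on TCP 3306 is allowed, while one web VM trying to SSH into its neighbour on the same subnet falls through to the default deny. That second case is the part subnet-level segmentation cannot give you.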

The firewall manager is very intuitive, with basic rules to set everything up, but the challenge is how to set the rules up right.

Policy Creation Costs Reduced with ARM

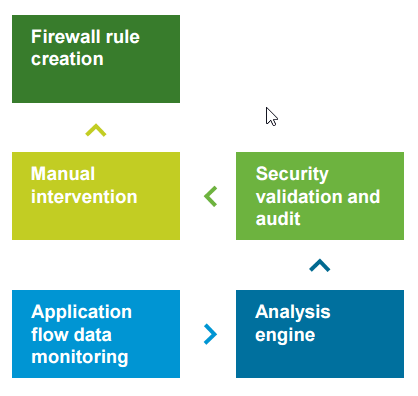

The cost of deploying new policies is significant in many organizations – some spent 10-50x the cost of their firewalls just to come up with the policies to segment subnets, only to end up with giant holes in their firewall rule set.

This is what makes NSX something you can actually deploy. You really need a tool like this in order to put something like NSX in production. Nobody understands application data flows (ok, some people do), and there are always mistakes made when segmenting your network.

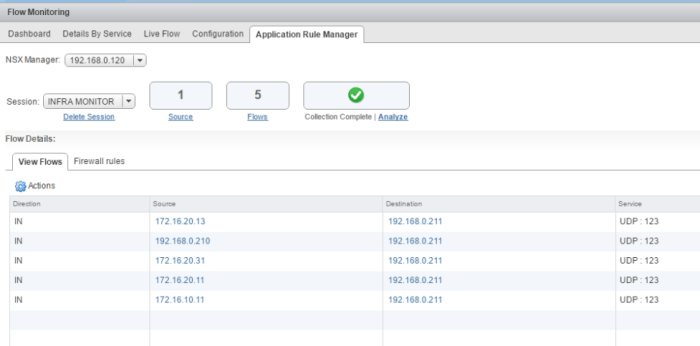

The good news here is something called ARM, the Application Rule Manager.

Everyone has done this: you set up your rules, set your allow all, watch your syslog for events, then go to deny, monitor your deny logs, anger a few users as things break, and fix your firewall rules. There has to be a better way, and there is with ARM.

You can monitor application flows in real time, and then create rule sets from those monitored data flows. ARM has been segregated from normal flow monitoring, so there is no impact to production traffic, and they do limit the number of VMs you can run ARM on at the same time. You are not supposed to run this all the time.
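The idea of turning monitored flows into rule sets can be sketched in a few lines of Python. This is only an illustration of the concept, not how ARM is implemented: the flow tuples, the `VM_GROUP` mapping and the `propose_rules` function are hypothetical. The sketch drops the ephemeral source port, maps addresses to groups, and collapses repeated flows into one candidate rule that still needs a human to approve it.

```python
from collections import Counter

# Hypothetical inventory: VM address -> security group.
VM_GROUP = {
    "10.0.1.10": "web",
    "10.0.1.11": "web",
    "10.0.2.20": "db",
}


def propose_rules(observed_flows, vm_group=VM_GROUP):
    """Collapse observed 5-tuples into candidate allow rules.

    Each flow is (src_ip, dst_ip, protocol, src_port, dst_port).
    The source port is ignored because it is ephemeral; addresses
    without a known group are kept as-is.
    """
    counts = Counter()
    for src_ip, dst_ip, protocol, _src_port, dst_port in observed_flows:
        src = vm_group.get(src_ip, src_ip)
        dst = vm_group.get(dst_ip, dst_ip)
        counts[(src, dst, protocol, dst_port)] += 1

    return [
        {
            "src": src,
            "dst": dst,
            "protocol": protocol,
            "dst_port": dst_port,
            "action": "allow",
            "flows_seen": seen,
            "approved": False,  # nothing is applied until reviewed
        }
        for (src, dst, protocol, dst_port), seen in sorted(counts.items())
    ]


# Three observed flows, two of which are the same conversation pattern.
flows = [
    ("10.0.1.10", "10.0.2.20", "tcp", 49152, 3306),
    ("10.0.1.11", "10.0.2.20", "tcp", 50000, 3306),
    ("10.0.1.10", "10.0.1.11", "tcp", 51000, 22),
]
candidates = propose_rules(flows)
```

Here the two database connections from different web VMs end up as a single web-to-db candidate, which is the kind of consolidation that keeps a rule set reviewable.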

Remember this is an ALG, so it understands ephemeral ports and protocols like FTP. If you allow FTP, then FTP will work. Windows RPC is just Windows RPC. All the rules can be cached and set up without being implemented, and then you can get your security person to review all of them, approve, and move forward.
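For anyone wondering what “understands FTP” means in practice, here is a rough Python sketch of what an ALG does in general. It is not NSX’s implementation. In passive mode, the FTP server’s 227 reply on the control channel carries the data address and port as six numbers, with the port being p1 * 256 + p2. An ALG reads that reply and opens a temporary pinhole for just that data connection. The `FtpPinholes` class and its method names are hypothetical.

```python
import re

# Matches the six comma-separated numbers in a "227 Entering Passive Mode" reply.
PASV_REPLY = re.compile(r"227 .*?\((\d+),(\d+),(\d+),(\d+),(\d+),(\d+)\)")


def data_endpoint_from_pasv(reply):
    """Return (ip, port) announced in a 227 reply, or None if not a 227 reply."""
    match = PASV_REPLY.search(reply)
    if match is None:
        return None
    h1, h2, h3, h4, p1, p2 = (int(part) for part in match.groups())
    return f"{h1}.{h2}.{h3}.{h4}", p1 * 256 + p2


class FtpPinholes:
    """Tracks data connections learned from the FTP control channel."""

    def __init__(self):
        self.allowed = set()

    def inspect_control(self, client_ip, reply):
        """Open a pinhole if the server reply announces a data endpoint."""
        endpoint = data_endpoint_from_pasv(reply)
        if endpoint is not None:
            self.allowed.add((client_ip, endpoint[0], endpoint[1]))

    def permits(self, client_ip, dst_ip, dst_port):
        """Only the exact client/server/port combination is let through."""
        return (client_ip, dst_ip, dst_port) in self.allowed


pinholes = FtpPinholes()
pinholes.inspect_control(
    "10.0.1.10", "227 Entering Passive Mode (10,0,2,20,195,80)."
)
```

The rule author only ever writes “allow FTP”; the dynamic port never has to appear in the rule set, which is why an ALG avoids the wide-open high-port ranges a plain ACL would need.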

Once things are set up, you can monitor the actual flows, and show packets and bytes so you can see your rules up and working.

Automate with vRealize

The automation within vRealize has been around for some time, but the ability to deploy automated NSX rules and pre-defined architectures now gives large organizations the power to deploy new applications, or even container applications, very quickly. The good news is, the interface here is very easy to understand, and with a “canvas” style approach you can build out your applications and services in a graphical manner and see relationships with attached policies.

I could honestly go on for a while about just automation, but here is a taste of the interface. Expect more in another article.
